Technology Assisted Review Models: Let's Move Forward

It was exciting to see the number of significant conversations about technology-assisted review (TAR) and analysis at LegalTech New York, even though the industry still hasn’t settled on standard terminology. Whether they’re calling it TAR, Computer-Assisted Review (CAR), Predictive Coding (PC), or any other name for this technology, people are taking notice and trying to understand how to implement this beneficial tool in their everyday litigation process.

Understanding the Basics of Technology-Assisted Review

Before you can implement any new solution, it’s important to understand the basics. While the specific algorithms behind this technology can be highly technical when discussed in depth, those details are typically less important than the process used to implement the technology. Therefore, a higher-level understanding is paramount to appropriately implementing TAR or any of the existing technologies on the market today.

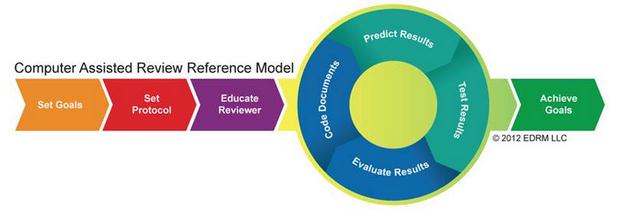

The Electronic Discovery Reference Model (EDRM) is one model that emphasizes quality processes, and it has proven extremely successful in guiding ediscovery best practices through all stages of litigation. Given the success of this model, the EDRM search sub-team has moved forward with another visualization that aims to demystify TAR: a draft of the Computer Assisted Review Reference Model (CARRM).

I am proud to be a member of the team that worked to put this reference model together. The group was composed of people with diverse backgrounds—including both vendors and end-users—and we were all pleased to find that the overarching visualization covered the solution well, regardless of technical implementation.

The CARRM identifies eight critical steps in the TAR process

First and foremost, the CARRM strongly emphasizes a planning process, during which the review team should (1) determine their desired outcome in leveraging TAR; (2) build rules and methodologies for the humans and the technology specific to the case; and (3) convey those rules and methodologies to the reviewers involved in the TAR process.

Next, the CARRM identifies a process of coding documents, from which the technology learns and classifies other documents in the corpus, followed by human testing and evaluation. This process is iterative, meaning these steps should continue in a cycle until appropriate retrieval metrics (such as precision, recall and F-measure) are achieved and the initial goals are met. Once the evaluation stage confirms that those goals have been met, the TAR process can end and the team may move on to the next phase of review.
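To make the evaluation step a little more concrete, here is a minimal sketch of how precision, recall and F-measure could be computed from a validation sample in which human reviewers and the technology have both coded the same documents. This is an illustration only and is not part of the CARRM draft: the function names, the `beta` parameter and the target thresholds are hypothetical, and a real project would set its own goals during planning.

```python
def retrieval_metrics(human_calls, tar_calls, beta=1.0):
    """Return (precision, recall, f_measure) for a validation sample.

    human_calls and tar_calls are equal-length sequences of booleans,
    where True means "responsive". The human call is treated as correct.
    All names here are illustrative assumptions, not CARRM terminology.
    """
    if len(human_calls) != len(tar_calls):
        raise ValueError("both sequences must cover the same documents")

    pairs = list(zip(human_calls, tar_calls))
    true_pos = sum(1 for human, tar in pairs if human and tar)
    false_pos = sum(1 for human, tar in pairs if not human and tar)
    false_neg = sum(1 for human, tar in pairs if human and not tar)

    # Precision: share of documents the technology flagged that were responsive.
    flagged = true_pos + false_pos
    precision = true_pos / flagged if flagged else 0.0

    # Recall: share of responsive documents that the technology found.
    responsive = true_pos + false_neg
    recall = true_pos / responsive if responsive else 0.0

    # F-measure: weighted harmonic mean of the two (beta=1 weights them equally).
    if precision == 0.0 and recall == 0.0:
        return precision, recall, 0.0
    b2 = beta ** 2
    f_measure = (1 + b2) * precision * recall / (b2 * precision + recall)
    return precision, recall, f_measure


def goals_met(metrics, min_precision, min_recall):
    """Return True when the sample meets the (hypothetical) planned targets."""
    precision, recall, _ = metrics
    return precision >= min_precision and recall >= min_recall


if __name__ == "__main__":
    # Eight sampled documents: human coding versus the technology's coding.
    human = [True, True, True, False, False, True, False, False]
    tar = [True, True, False, True, False, True, False, False]
    metrics = retrieval_metrics(human, tar)
    print(metrics)
    print(goals_met(metrics, min_precision=0.8, min_recall=0.8))
```

In an iterative workflow, a check like `goals_met` would run after each training round; if it fails, the team codes more documents and repeats the cycle.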

The timing must be right to start visualizing the high-level process, because several other illustrative models emerged in the past year (each built without knowledge of the others):

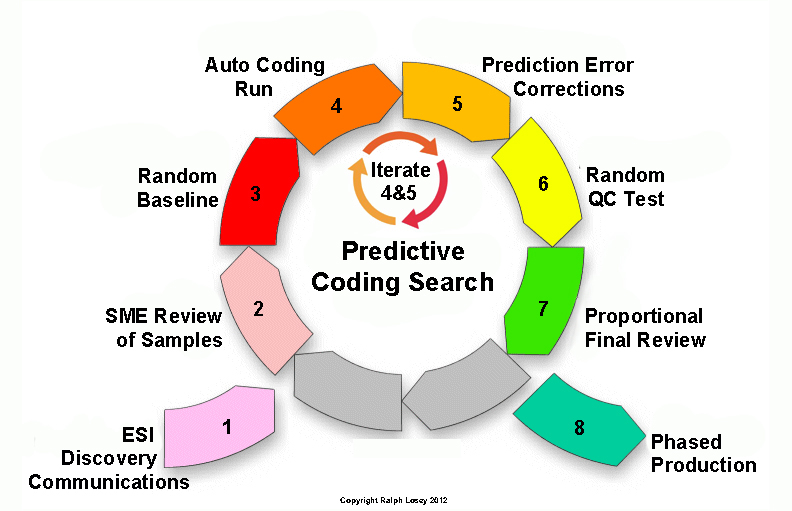

- Ralph Losey of e-Discovery Team and Electronic Discovery Best Practices released a Predictive Coding graphic identifying technical steps between initial ESI communications and phased production

While the models have differences, mainly in terminology and aesthetics, all models bear important similarities. Namely, each model illustrates the importance of:

- thorough planning;

- employing a cyclical process with significant interaction between the human reviewer and the technology; and

- evaluating the results of such interplay before moving on to the next stage.

Like the ongoing debate about vernacular, the primary differences between these models are mostly a matter of semantics that will resolve themselves with time, familiarity and, eventually, increased standardization. In the meantime, pay close attention to the standards and principles advanced in each model and adhere to them when evaluating the viability of TAR for a specific case.

Most importantly, the EDRM group is looking for feedback on its model, and I highly encourage you to review it and respond with support, questions, or ideas for improvement. The sooner we can all agree on the overarching process for implementing this technology, the sooner we can focus on applying this solution to everyday processes and reap its benefits. Please send any feedback about the CARRM draft directly to mail@edrm.net.